Graviton3

For cloud-based computation, AWS offers many EC2 instance types and sizes with various CPU architectures and processor speeds, so you can select the most suitable instance option for each specific workload.

EC2 offers multiple generations of x86 processors from both Intel and AMD. AWS has also been building its own chips for the data center. It started with the chips powering Nitro cards and expanded to machine learning chips with Inferentia and Trainium, and to host compute with Graviton. Graviton processors use the Arm instruction set, which is also found in phones, the latest generation of Apple Macs, and the fastest supercomputer in the world. The second-generation Graviton2 was released in December 2019 and now powers 12 instance types, which offer a 40% overall price-performance improvement over x86-based instances.

AWS has been rapidly iterating on Graviton processors, and at the end of 2021 it announced Graviton3. According to AWS, the third generation of Graviton improves general-purpose performance by 25% and expands the capabilities of Graviton processors to machine learning (up to 3x higher performance) and HPC (up to 2x higher performance). Graviton processors are also more power efficient, with AWS stating that Graviton3 processors use up to 60% less energy than other instance types for similar performance.

ESW Capital

ESW Capital is a portfolio of over a hundred enterprise software companies.

In 2008, ESW made a strategic choice to go all-in on AWS due to its rapid pace of innovation, reliability, and cost-effective performance. Over the years, ESW has benefited from the growth of AWS services, which allowed it to transform its enterprise portfolio into modern cloud-native solutions. The release of AWS Graviton3 empowers ESW Capital to reduce costs dramatically further without any performance tradeoffs for its compute workloads.

ESW Capital’s centralized technical product management and engineering approach, which focuses on turning AWS into a core pillar across its products, allows it to leverage the vast majority of AWS managed services in concerted architectures at scale.

As soon as Graviton2 instances reached general availability, we started migrating workloads to Graviton2 for both cost and performance reasons, including Kubernetes containers, AWS Lambda functions, and all Graviton2-supported AWS managed services across our products.

Moving to Graviton on AWS OpenSearch involved merely changing the instance type, with no change to any external interfaces in most products, so the cost benefits were immediate.

Language choice does make a difference when migrating to Arm processors. For JavaScript/Node.js applications that do not use node-gyp modules, it was just a matter of running the containers on the new architecture, since the underlying runtimes were already Arm compatible. For compiled languages like Go, we had to recompile the code with GOARCH as an additional build parameter. (Note: we highly recommend running Go version 1.18, since it passes function arguments in registers rather than on the stack, improving performance by as much as 15%.)
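As a rough illustration of the Go recompilation step, the sketch below prepares the environment variables and command for cross-compiling to 64-bit Arm. `GOOS` and `GOARCH` are the standard Go toolchain variables; the helper names and the output path `bin/app-arm64` are hypothetical and not taken from our build system.

```python
import os


def go_build_env(goarch="arm64", goos="linux", base_env=None):
    """Return a copy of the environment with Go cross-compilation variables set."""
    env = dict(os.environ if base_env is None else base_env)
    env["GOOS"] = goos      # target operating system
    env["GOARCH"] = goarch  # target CPU architecture; "arm64" covers Graviton
    return env


def go_build_command(output, package="."):
    """Return the `go build` command line for one package (hypothetical layout)."""
    return ["go", "build", "-o", output, package]


# Usage (requires a Go toolchain on PATH, so it is left commented out):
#   import subprocess
#   subprocess.run(go_build_command("bin/app-arm64"), env=go_build_env(), check=True)
```

Keeping the architecture in a single parameter makes it straightforward to produce x86 and Arm binaries from the same pipeline by calling the helper twice with different `goarch` values.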

Our flagship cost-optimization product, CloudFix, used in more than 45,000 AWS accounts, offers automatic conversion to Graviton2 for Elasticsearch / OpenSearch, ElastiCache, and RDS (including RDS Aurora), and will suggest conversions to Graviton3 once it is generally available.

DevSpaces

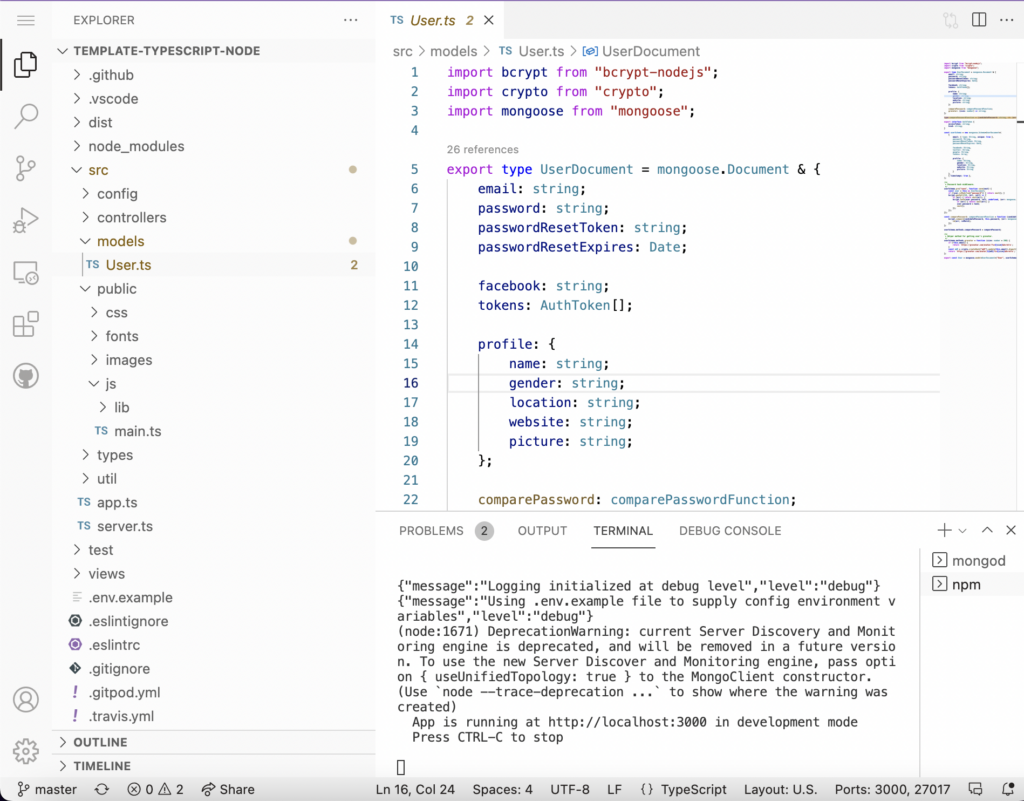

DevSpaces is a web-based development environment with a Visual Studio Code experience, built upon Gitpod, with extensive team collaboration features for all major programming languages, enabling rapid dockerization of your applications. It supports both x86 and Arm-based development.

Once we realized that our applications were moving to Graviton, we found that we had a big gap in our development process. Most of our developers were still using x86-based development machines. We needed a development environment that was identical to the environment that the applications would be deployed in. DevSpaces for Graviton-based development was created to bridge that gap.

With DevSpaces, developers can instantly set up their standardized team development environments and start working in just a few minutes. It supports code repositories in GitHub, Bitbucket, or GitLab, and one-click deployment to AWS ECS. Internally, DevSpaces uses EKS and runs individual dev environments as pods: each developer is allocated a single pod as their workspace.

Unlike vscode.dev, DevSpaces is a full desktop replacement, not just an IDE, and it lets you define a custom dev environment with all dependent packages.

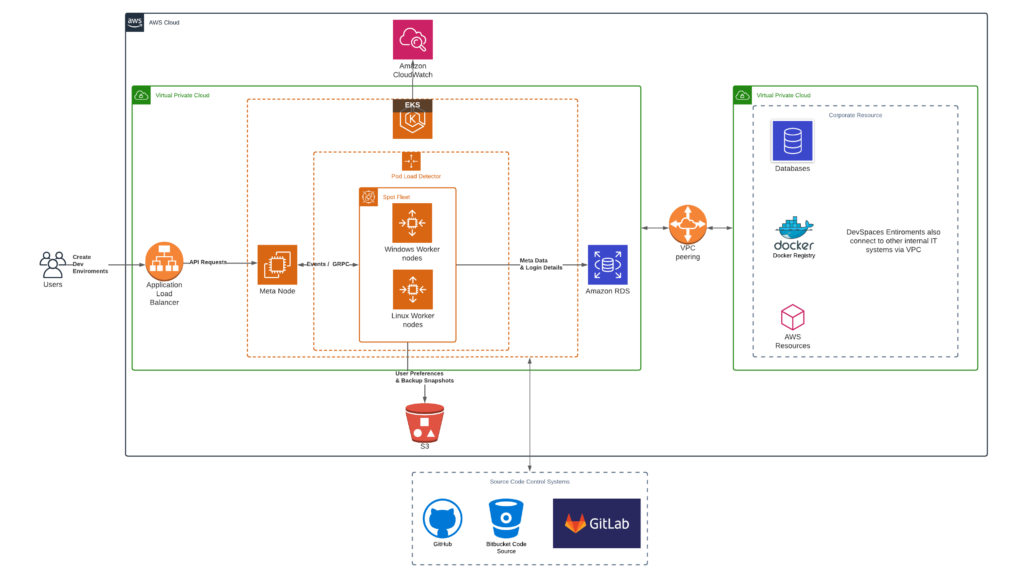

The AWS architecture of DevSpaces is as follows:

The base architecture relies on an open-source solution, adapted for AWS, that connects to existing source control systems:

- A meta node hosts all the services required for routing requests, authentication and OS selection.

- The actual remote environments are spawned on the worker nodes.

- For Linux, each dev environment gets a Kubernetes pod; for Windows, a dedicated VM. The pods for x86, Graviton2, and Graviton3 all get 1 vCPU and 2 GB RAM, with a maximum burst to 5 vCPUs and 12 GB RAM. This is the maximum specification Kubernetes will allocate.

- A load detector proactively and dynamically predicts the optimal node count and scales in and out as needed.
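To make the pod sizing above more concrete, here is a minimal sketch of how such a workspace pod could be described. It assumes the baseline maps to Kubernetes resource requests and the burst ceiling maps to resource limits, and that GB is expressed as `Gi`; the pod name, container name, and image are hypothetical. `kubernetes.io/arch` is the standard node label for CPU architecture.

```python
import json


def dev_pod_manifest(name, arch="arm64"):
    """Build a pod manifest for one developer workspace (illustrative only)."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Schedule the pod only on nodes with the requested CPU architecture.
            "nodeSelector": {"kubernetes.io/arch": arch},
            "containers": [
                {
                    "name": "workspace",  # hypothetical container name
                    "image": "example.com/devspaces/workspace:latest",  # hypothetical
                    "resources": {
                        # Assumed mapping: baseline allocation -> requests.
                        "requests": {"cpu": "1", "memory": "2Gi"},
                        # Assumed mapping: maximum burst -> limits.
                        "limits": {"cpu": "5", "memory": "12Gi"},
                    },
                }
            ],
        },
    }


# Usage: print the manifest as JSON, which kubectl also accepts.
#   print(json.dumps(dev_pod_manifest("dev-alice"), indent=2))
```

Using the same requests and limits for x86 and Graviton pods keeps the environments comparable, with only the `nodeSelector` value changing between architectures.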

Migration of DevSpaces to Graviton

The principal question for anybody planning an x86 to Graviton migration is whether the cost savings from using cheaper Graviton instances are larger than the engineering cost of executing the migration.

In general, the larger the number of x86 instances to be migrated to Graviton, the larger the cost savings – for example, it may not be worth migrating an application running on just one EC2 instance. On the other hand, the higher the complexity of a solution, the greater the migration effort and the lower the net cost savings – for example, if your solution depends on hand-coded assembly, intrinsics, or infrequently used libraries, the migration costs are likely to be substantially higher than if you are migrating a standard Python application that requires virtually no migration effort.

At ESW Capital, we are innovators, so, naturally, we wanted to be among the first to try Graviton3. The number of our EC2 instances running x86 was so substantial that migrating most of our workloads to Graviton paid off financially: the savings were 15% to 25% of the Intel instance costs. In addition to the obvious cost advantages, user satisfaction with our workloads improved dramatically. Because each Graviton vCPU is a physical core rather than an SMT thread, workloads were better isolated and avoided the common “noisy neighbor” problems of shared infrastructure. The usual complaints about random slowness at peak times were never observed in Graviton clusters.
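The trade-off between instance savings and engineering effort can be sketched as a simple break-even calculation. The function name and every input value below are hypothetical placeholders; substitute your own monthly spend, expected savings fraction, and one-off migration cost.

```python
def breakeven_months(monthly_x86_cost, savings_fraction, migration_cost):
    """Months until cumulative instance savings cover the one-off migration cost."""
    monthly_savings = monthly_x86_cost * savings_fraction
    if monthly_savings <= 0:
        # No savings means the migration never pays for itself.
        return float("inf")
    return migration_cost / monthly_savings


# Usage with made-up numbers: 10,000 per month on x86, 20% savings,
# and 30,000 of engineering effort pay back after roughly 15 months.
#   breakeven_months(10_000, 0.20, 30_000)
```

A single small instance yields tiny monthly savings and a very long payback period, while a large fleet running a workload that needs little porting effort pays back quickly.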

Graviton2 Migration Journey

DevSpaces was migrated to Graviton2 as soon as it was released to general availability and we were able to provision enough Graviton2 instances.

Our original architecture used the r5.8xlarge instance type, and the target Graviton instance was r6gd.8xlarge, with a setting of 1 vCPU burstable to 5 and 2 GB RAM burstable to 16 GB.

Since we were ahead of the Graviton2 adoption curve, we encountered the following problems, whose solutions we share:

- Not all open-source components we depend on have Arm builds.

For example, the gRPC component in Node.js did not have Arm builds and still does not. Therefore, we ported it ourselves and made the port open source.

- GitHub Actions, used for build automation, provides no managed Arm support.

To overcome this limitation, there are two possible solutions:

  - We could use QEMU for Arm builds of C++ or node-gyp code – compare with this article.

  - We could use self-hosted runners to create builds.

We chose the second solution, and our choice proved to be the right one – the builds are substantially faster.

- Multi-architecture Docker builds

When supporting multiple CPU architectures, it is best practice to build multi-architecture Docker images. This allows developers and production systems to pull the appropriate Docker image for the hardware they are running on with no code change.

- Build Kubernetes (K8s) compatible Ubuntu Arm AMIs

When we migrated our workloads to Graviton2, there were no compatible Ubuntu images for K8s. We prefer Ubuntu over Amazon Linux because our solution uses the shiftfs kernel module, which is responsible for uid/gid shifting for containers. Therefore, we created custom Ubuntu AMIs that incorporate the shiftfs changes. We also added support for containerd in addition to the default Docker runtime; AWS later added this as an optional parameter.

- Parallelize the code as much as possible – Graviton3’s performance shines most in multithreaded code.
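As a rough illustration of the multi-architecture Docker builds mentioned in the list above, the sketch below assembles a `docker buildx build` command that targets both x86 and Arm. The `--platform`, `--tag`, and `--push` flags are standard buildx options; the helper name and the image reference are hypothetical.

```python
def buildx_command(image, platforms=("linux/amd64", "linux/arm64"),
                   context=".", push=True):
    """Return a `docker buildx build` command for a multi-architecture image."""
    cmd = [
        "docker", "buildx", "build",
        "--platform", ",".join(platforms),  # one image manifest per platform
        "--tag", image,
    ]
    if push:
        # Multi-platform results are typically pushed straight to a registry.
        cmd.append("--push")
    cmd.append(context)
    return cmd


# Usage (requires Docker with buildx, so it is left commented out):
#   import subprocess
#   subprocess.run(buildx_command("example.com/team/app:1.0"), check=True)
```

With an image built this way, the same tag resolves to the matching architecture on both x86 and Graviton nodes, which is what allows pulling with no code change.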

Graviton3 Migration Journey

All we really needed to do was change the instance types: the AMIs and Docker images built for Graviton2 worked without any modification.
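Since only the instance type changes, the switch can be expressed as a small mapping from a Graviton2 family to its Graviton3 counterpart. This is a sketch under assumptions: the `c6g` to `c7g` pair comes from the instance families used in the benchmarks in this post, the helper name is hypothetical, and you should confirm that the target size is actually offered before applying it.

```python
def to_graviton3(instance_type, family_map=None):
    """Swap a Graviton2 instance family for its Graviton3 equivalent, keeping the size."""
    if family_map is None:
        family_map = {"c6g": "c7g"}  # assumed mapping; extend as families appear
    family, sep, size = instance_type.partition(".")
    if not sep or not size:
        raise ValueError(f"not an instance type: {instance_type!r}")
    if family not in family_map:
        # Leave unknown families untouched rather than guessing.
        return instance_type
    return f"{family_map[family]}.{size}"


# Usage:
#   to_graviton3("c6g.4xlarge")  # -> "c7g.4xlarge"
```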

DevSpaces’ Benchmarking

DevSpaces is highly valuable for benchmarking. Individual DevSpaces are enclosed in containers with hard limits on compute, memory, and storage utilization, so if we test one on Graviton and one on x86, we avoid picking up system noise and can compare the architectures correctly.

From the DevSpaces users’ perspective, the only relevant benchmark is the time saved for every single build – pure CPU benchmarking does not measure the improvements in their overall productivity. The time saved is material since it translates into hours per week.

We have measured the build times along with the time required for running key unit tests for some of our most complex software solutions:
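A build-time measurement of this kind can be sketched with a small timing harness. This is not the tooling we used, only a minimal illustration: the function names are hypothetical, and the build command you pass in is whatever your project uses.

```python
import statistics
import subprocess
import time


def time_runs(action, repeats=3):
    """Run `action` several times and return the wall-clock duration of each run."""
    durations = []
    for _ in range(repeats):
        start = time.perf_counter()
        action()
        durations.append(time.perf_counter() - start)
    return durations


def build_action(cmd):
    """Wrap a build command (a list of arguments) as a callable for time_runs."""
    def run():
        subprocess.run(cmd, check=True,
                       stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
    return run


def summarize(durations):
    """Report the median alongside the spread, since single runs can be noisy."""
    return {
        "median_s": statistics.median(durations),
        "min_s": min(durations),
        "max_s": max(durations),
    }


# Usage (the command is a placeholder for your own build and test step):
#   summarize(time_runs(build_action(["make", "test"]), repeats=5))
```

Running the same harness inside identically sized workspaces on each instance family keeps the comparison focused on the architecture rather than on the surrounding system.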

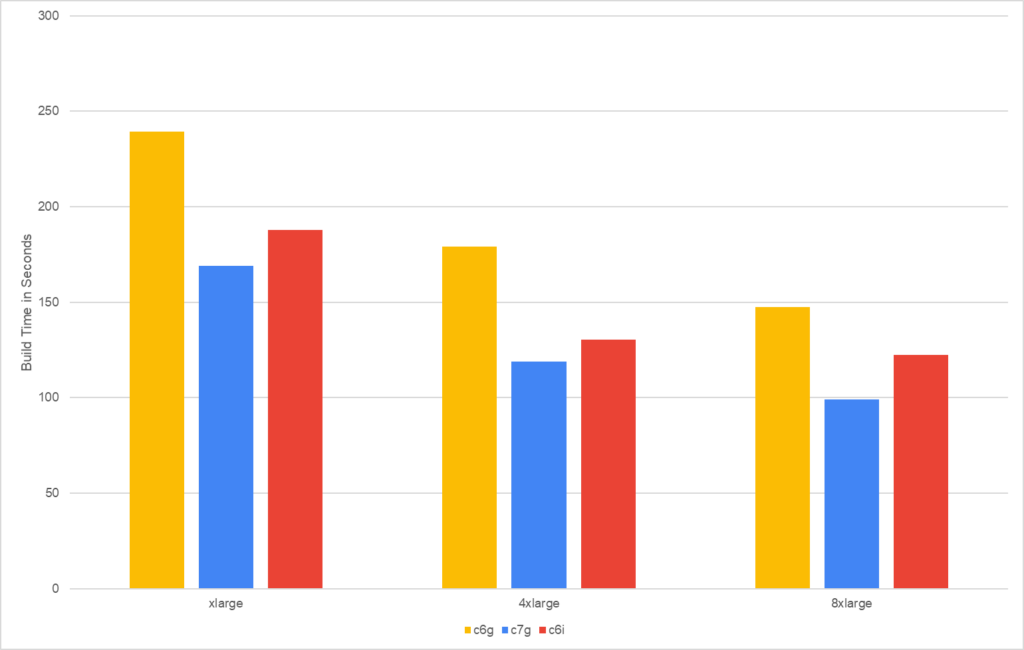

Sococo

Sococo Backend, a Node.js application, is an online virtual workplace environment for distributed teams.

The solution’s total build times are:

- about 30-35% faster on c7g than on the corresponding c6g, which translates into 25-30% cheaper build costs

- about 10-20% faster on c7g than on the corresponding c6i, which translates into 22-30% cheaper build costs.

The cost/performance is:

- 25-30% better on c7g compared to the corresponding c6g

- 22-30% better on c7g compared to the corresponding c6i

Conclusion: For Node.js applications we recommend using Graviton3 instances in every respect.

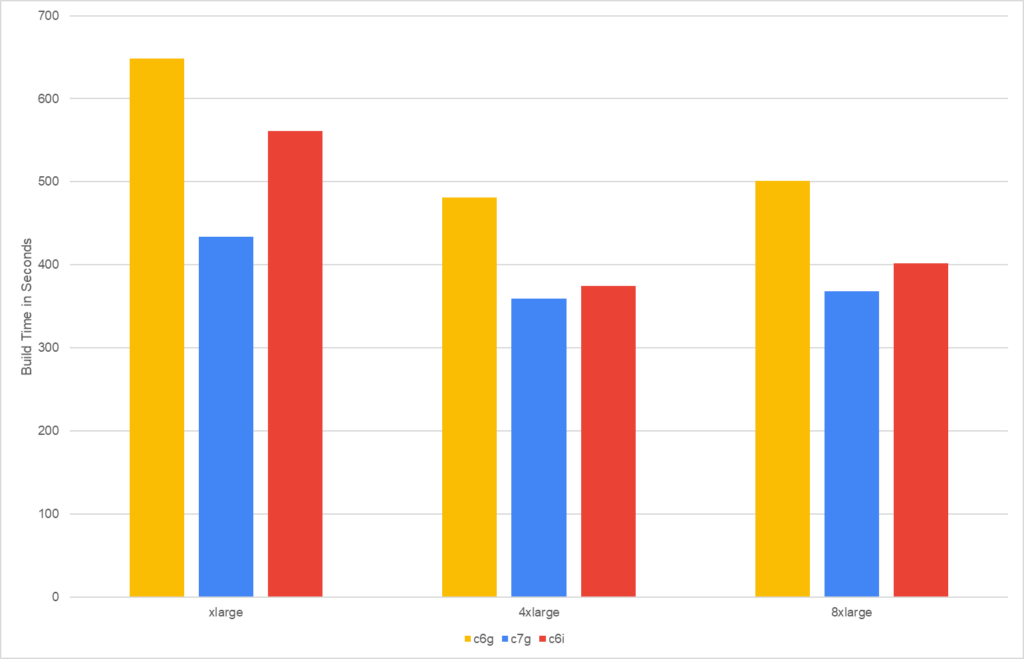

DevSpaces

DevSpaces, a Go application, is a software development workspace in a web browser – notice in this test we used DevSpaces to build DevSpaces!

The solution’s total build times are:

- about 25-35% faster on c7g than on the corresponding c6g, which translates into 22-30% cheaper build costs

- about 5-20% faster on c7g than on the corresponding c6i, which translates into 18-35% cheaper build costs.

The cost/performance is:

- 22-30% better on c7g compared to the corresponding c6g

- 18-35% better on c7g compared to the corresponding c6i

Conclusion: For Go applications we recommend using Graviton3 instances in every respect.

We do not recommend using the largest instance types for building large Go applications, since the build times exhibit a U curve, most likely caused:

- by the way Go deals with parallel builds

- by the way the large c7g instances handle network and EBS bandwidth – instances from c7g.medium up to c7g.4xlarge have a baseline bandwidth for both network and EBS I/O throughput and can use a network / EBS I/O credit mechanism to burst beyond their baseline bandwidth on a best-effort basis.
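Because of this U curve, the right instance size is best chosen from measurements rather than by picking the largest option. The sketch below selects the fastest and the cheapest size from a list of measured builds; the function names are hypothetical, and the sizes, prices, and timings in the usage note are made-up placeholders, not benchmark results.

```python
def build_cost(measurement):
    """Cost of one build: hourly price multiplied by build duration in hours."""
    _, hourly_price, build_seconds = measurement
    return hourly_price * build_seconds / 3600.0


def fastest_build(measurements):
    """Return the (size, hourly_price, build_seconds) entry with the shortest build."""
    return min(measurements, key=lambda m: m[2])


def cheapest_build(measurements):
    """Return the entry with the lowest cost per build."""
    return min(measurements, key=build_cost)


# Usage with placeholder values shaped like a U curve (the largest size is
# slower than the middle one):
#   runs = [("small", 0.10, 900), ("medium", 0.20, 400), ("large", 0.40, 450)]
#   fastest_build(runs)   # the "medium" entry
#   cheapest_build(runs)  # also the "medium" entry for these numbers
```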

Lessons Learned

- Upgrading to a newer version of Graviton was as straightforward as previous upgrades we’ve done with x86. As expected, newer features and instructions are enabled by newer operating systems, so you should upgrade if you can, e.g. to Ubuntu 22.04, to ensure all workflows make full use of Graviton3.

- While Graviton3 dramatically improved performance of single-threaded applications over Graviton2, it shines best in heavily parallelized applications. The maximum level of parallelization then determines the optimal instance size – see the Go U curve described above.

- Switching

  - from Graviton2 to Graviton3 delivers an instantaneous speed improvement of 20-35% while costing only about 6% more.

  - from c6i to Graviton3 delivers an instantaneous speed improvement of 5-20% and costs 20-35% less.

- Price/performance ratios

  - for Graviton3 compared to Graviton2 are 22-30% better

  - for Graviton3 compared to c6i are 18-35% better.
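The relationship between speed, price, and cost per build in the list above can be sketched as follows. It assumes that "X% faster" means the build time shrinks by X% and that cost per build is hourly price multiplied by build time; the function names are hypothetical.

```python
def relative_build_cost(time_reduction, price_change):
    """Cost per build on the new instance relative to the old one (1.0 = unchanged).

    time_reduction: fraction by which build time shrinks (0.30 for 30% faster).
    price_change: fractional change in hourly price (0.06 for 6% more expensive).
    """
    return (1.0 - time_reduction) * (1.0 + price_change)


def cost_saving(time_reduction, price_change):
    """Fraction saved per build after switching instance types."""
    return 1.0 - relative_build_cost(time_reduction, price_change)


# Usage: a 30% shorter build on an instance costing about 6% more gives
# 0.70 * 1.06 = 0.742 of the old cost, a saving of roughly 26% per build.
#   cost_saving(0.30, 0.06)
```

Under these assumptions, a speed-up in the upper part of the Graviton2 to Graviton3 range lands inside the 22-30% price/performance improvement quoted above, despite the higher hourly price.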

Summary

The core value of DevSpaces is providing the best possible development environment for building and testing complex software solutions that run on AWS. We have gained detailed knowledge of the different compilers used in DevSpaces, which lets us route each language to a pod with the optimal CPU type.

After more than four months of extensive testing, we are very impressed by the responsiveness and performance of DevSpaces on c7g instances.

We are going to migrate all our production systems to c7g once it enters general availability.